When you transcribe audio in Norwegian or other languages with a general-purpose model, you're accepting a compromise. Models like openai/whisper-large-v3 are trained on hundreds of languages simultaneously. They're broadly capable, but they're not built for the specifics of Nordic speech.

We're now adding NbAiLab/nb-whisper-large for Norwegian and openai/whisper-large-v3 for general multilingual use, joining our existing KBLab/kb-whisper-large for Swedish. All three run on the same endpoint, on our infrastructure in Stockholm.

The models

NbAiLab/nb-whisper-large — Norwegian

Fine-tuned by NbAiLab on 66,000 hours of Norwegian speech sourced from NRK, Stortinget, and the National Library of Norway. It handles both Bokmål and Nynorsk. Like kb-whisper-large, it significantly outperforms general-purpose models on Norwegian audio.

openai/whisper-large-v3 — multilingual

The current general-purpose Whisper model from OpenAI. Trained on five million hours of audio across 99 languages. If your application serves a broad language mix, or if you need a reliable baseline for a language without a dedicated fine-tune, this is your starting point.

KBLab/kb-whisper-large — Swedish

Fine-tuned by KBLab (the National Library of Sweden) on over 50,000 hours of Swedish speech. On CommonVoice Swedish, it reaches a word error rate of 4.1 compared to 9.5 for whisper-large-v3. That's more than 50% fewer transcription errors on the same audio. On average across benchmarks, KBLab reports a 47% WER reduction versus the general-purpose model.

If you're building for Swedish-language users, this is the right model.

Both Nordic fine-tunes are optimised for their respective languages. If you're transcribing audio in other languages, use whisper-large-v3.

What the API supports

The endpoint is /v1/audio/transcriptions, compatible with the OpenAI Whisper API, so if you're already calling Whisper elsewhere, you can point it at Berget AI without rewriting your integration.

# Swedish

curl -X POST https://api.berget.ai/v1/audio/transcriptions \

-H "Authorization: Bearer BERGET_API_KEY" \

-F file=@audio.mp3 \

-F model=KBLab/kb-whisper-large \

-F language=sv

# Norwegian

curl -X POST https://api.berget.ai/v1/audio/transcriptions \

-H "Authorization: Bearer BERGET_API_KEY" \

-F file=@audio.mp3 \

-F model=NbAiLab/nb-whisper-large \

-F language=no

# Multilingual

curl -X POST https://api.berget.ai/v1/audio/transcriptions \

-H "Authorization: Bearer BERGET_API_KEY" \

-F file=@audio.mp3 \

-F model=openai/whisper-large-v3Beyond the standard API, the endpoint includes WhisperX features:

Word-level timestamps. Pass align=true to get per-word start, end, and confidence scores. Useful for subtitle generation, video editing, and anything that needs precise timing.

Speaker diarisation. Pass diarize=true to get speaker labels (SPEAKER_00, SPEAKER_01, etc.) per segment. Works well with two to four distinct speakers; overlapping speech is a known limitation. Minimal impact on processing time.

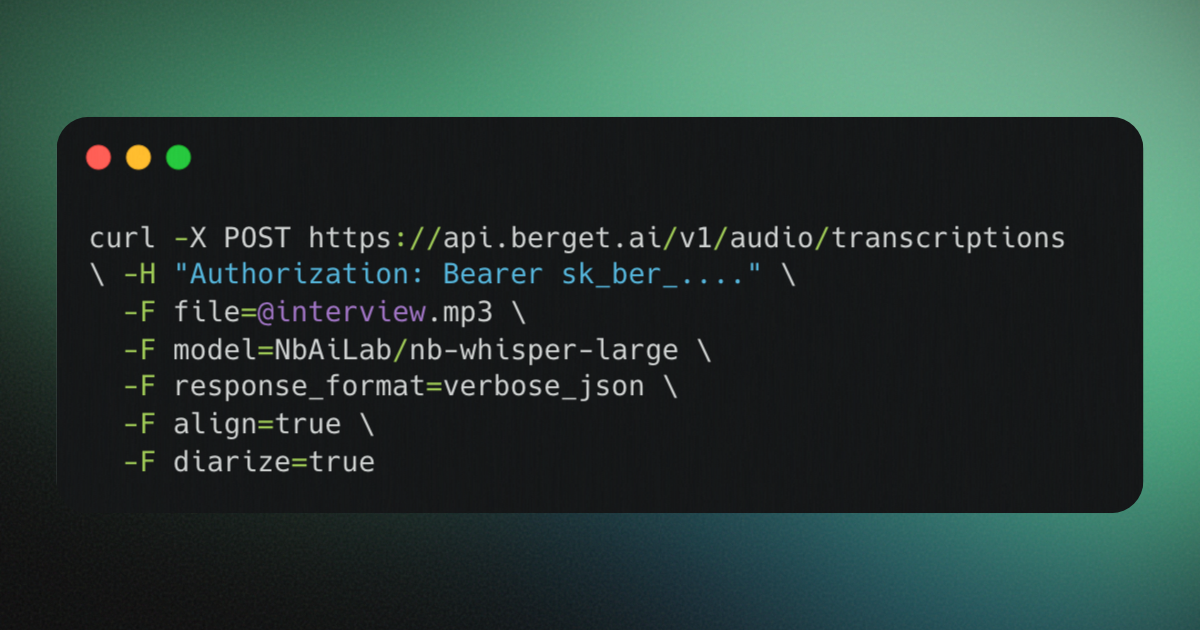

You can combine both in a single request:

curl -X POST https://api.berget.ai/v1/audio/transcriptions \

-H "Authorization: Bearer BERGET_API_KEY" \

-F file=@interview.mp3 \

-F model=KBLab/kb-whisper-large \

-F response_format=verbose_json \

-F align=true \

-F diarize=trueProcessing runs at roughly 15–25x real time depending on file length. Word-level alignment adds around 24% overhead on shorter files; diarisation adds minimal overhead.

Full request and response documentation is available in the API reference.

Why this matters if your data is sensitive

Audio data is personal data. A voice recording can identify a speaker, capture confidential conversations, and fall squarely within GDPR's definition of biometric data in certain contexts. The question of where that audio is processed and under what legal framework is not abstract.

Berget AI runs on our own hardware in Stockholm. Your data does not leave Swedish jurisdiction. It's not subject to the US CLOUD Act or FISA 702, both of which give US authorities legal access to data held by American companies regardless of where the servers sit.

That's true for all three models, including openai/whisper-large-v3. The weights are open; the infrastructure is ours.

Choosing a model

| Use case | Model |

|---|---|

| Norwegian transcription (Bokmål and Nynorsk) | NbAiLab/nb-whisper-large |

| Multilingual or non-Nordic languages | openai/whisper-large-v3 |

| Swedish transcription | KBLab/kb-whisper-large |

All three are available now via the Berget AI API.

For more information about our new models, see the model cards:

Questions or feedback? Join the conversation in our Matrix community.